The Reason Your Content Isn’t Getting Indexed on Google Has Been Found – Version Two

One of the questions we’ve been asked repeatedly in recent months is why some website pages or newly published articles fail to appear in Google—even after being published and submitted for indexing. Many site owners immediately assume this means their website has been penalized or that something is seriously wrong with Google. In reality, the explanation is often far less alarming.

Over the past few years, Google’s approach to indexing content has evolved. Not every page that gets published is automatically added to search results anymore. Google has become more selective, choosing to index only those pages that truly offer value to users.

In this article, drawing on practical experience, detailed analysis in Google Search Console, and real-world testing, we will clearly explain why content sometimes isn’t indexed and what conditions must be met for a page to earn its place in search results. If you are facing indexing challenges on your own website, this guide will help you better understand what’s happening—and what you can do about it.

Does Non-Indexing Mean Your Website Has Been Penalized?

One of the first concerns that arises when a new page isn’t indexed is whether the website has been penalized by Google. This worry is completely understandable, but in most cases, non-indexing has nothing to do with penalties.

A penalty—often referred to as receiving a Manual Action from Google—typically results from high-risk practices such as black-hat SEO tactics, spammy link building, widespread duplicate content, or serious security issues. When this happens, Google usually notifies the site owner directly through Google Search Console, and the effects are noticeable: significant traffic loss or large numbers of pages disappearing from search results.

However, when only a small number of pages remain unindexed and the rest of the site performs normally, these warning signs are usually absent.

The Difference Between Non-Indexing and a Google Penalty

Non-indexing simply means that Google has discovered and evaluated a page but decided not to include it in its search database. This decision is not necessarily negative or punitive—it is often a matter of selection.

Every day, Google encounters an enormous volume of new pages. Naturally, not all of them meet the threshold for indexing.

A penalty, on the other hand, is a deliberate action taken in response to violations of Google’s guidelines. When a site is penalized, either the entire domain or a significant portion of its pages typically suffers a sharp drop in rankings or disappears from search results altogether.

So, if only a few specific pages aren’t indexed while the rest of your website remains stable, it is highly unlikely that your site has been penalized.

Why Aren’t All Pages Indexed by Google?

An important reality to accept is that Google is not obligated to index every page published on the internet. Contrary to popular belief, simply publishing content does not guarantee visibility in search results.

Google’s goal is to deliver the most helpful and relevant information to its users. Pages that fail to provide clear added value may never be indexed.

Content that is repetitive, lacks a well-defined focus, does not adequately address user intent, or merely replicates existing pages—either on your own site or elsewhere—generally has a lower chance of being indexed. Even large, authoritative websites contain pages that remain unindexed, either intentionally or naturally, because Google does not consider them essential or beneficial.

Google’s New Perspective on Index Selection

In recent years, Google’s philosophy toward indexing has shifted significantly. Unlike in the past—when many crawled pages were automatically added to search results—today the processes of crawling and indexing are distinctly separate. This means Google may review a page yet ultimately decide not to index it.

The modern approach is built around one central principle: real value for the user.

Pages that clearly solve a problem, provide comprehensive and trustworthy information, and deliver a strong user experience are prioritized for indexing. Meanwhile, pages created merely to expand a website’s content volume, fill gaps, or imitate existing search results often remain in the indexing queue—or are ignored entirely.

For this reason, non-indexing is often a signal rather than a punishment. It suggests that it may be time to reassess the page’s quality, purpose, and genuine contribution. Ask yourself: does this content truly add something meaningful to search results, or does it simply add to the noise?

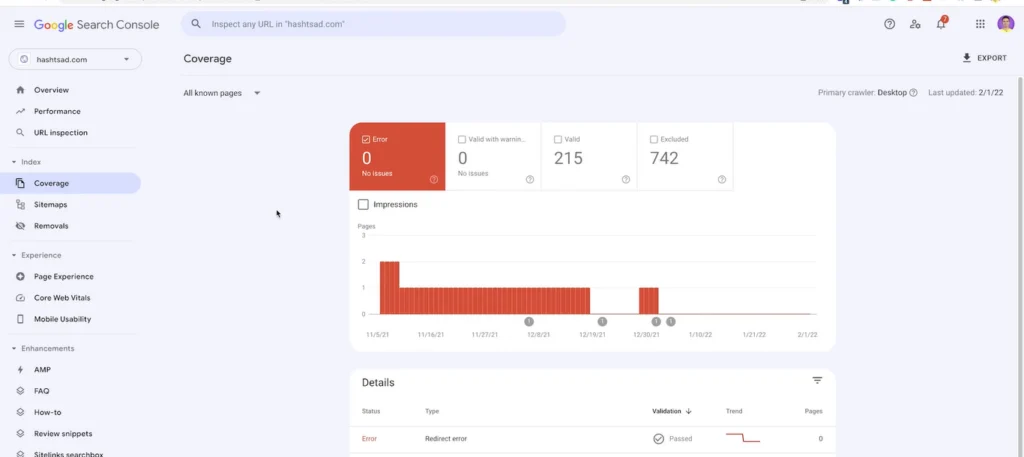

The Right Process for Investigating Non-Indexed Pages in Google Search Console

When a page isn’t indexed by Google, the first and most important step is not guesswork or emotional decision-making but a careful evaluation of the page’s status in Google Search Console. Search Console is Google’s official tool for site owners and the most direct source of data on crawling activity, indexing status, and potential page-level issues. When you follow the correct diagnostic process, you can usually identify the reason behind non-indexing with clarity.

For any page, the starting point should be the URL Inspection tool. This feature allows you to analyze the exact status of a specific URL from Google’s perspective and determine whether the page has been discovered, crawled, or even considered eligible for indexing.

What Is the URL Inspection Tool and How Is It Used?

The URL Inspection tool enables you to evaluate a specific page on your website exactly as Google sees it. By entering the page address, you can access details such as indexing status, whether Google can reach the page, the presence of technical issues, and even the version of the page that Google last reviewed.

More importantly, the tool does more than simply confirm whether a page is indexed—it explains why it is or isn’t. Many site owners notice that a page hasn’t been indexed and immediately assume the issue lies with Google. In reality, the URL Inspection tool clarifies whether the page was accessible, whether it has been crawled, and whether it meets the necessary criteria for indexing.

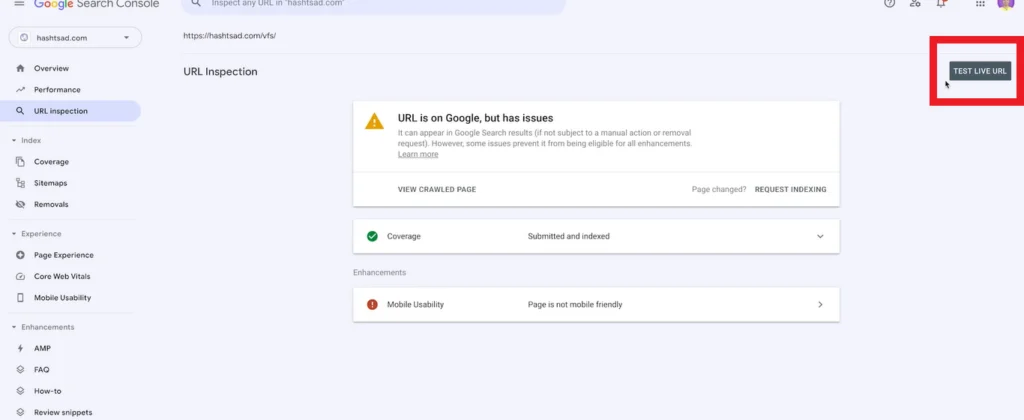

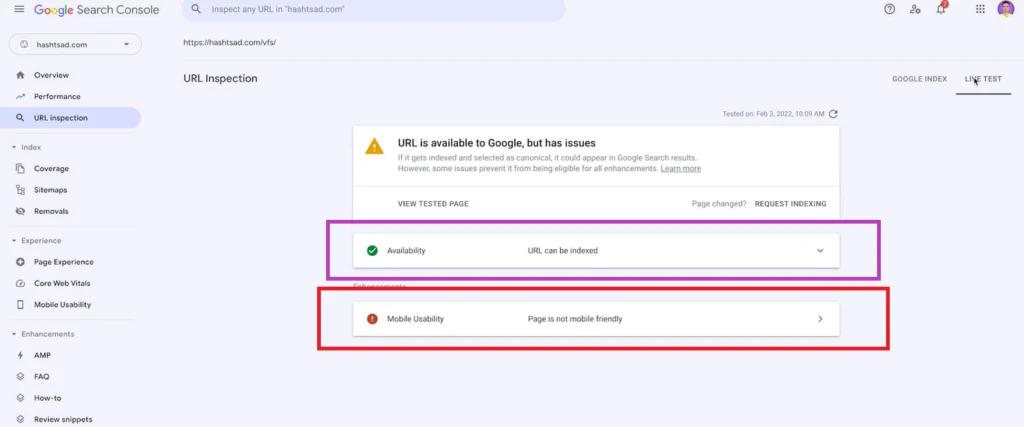

The Difference Between Test Live URL and Index Status

One area that often causes confusion is the distinction between the Test Live URL feature and a page’s indexing status. When you click on Test Live URL, Google checks your page in real time. This test shows whether Google can currently access the page and whether any technical issues are preventing it from being crawled.

However, a successful Test Live URL result does not guarantee that your page will be indexed. The test only confirms that the page is technically sound and that no barriers are blocking Google’s crawler. The final decision to index a page still depends on factors such as content quality, originality, and the value it provides to users.

In simple terms, Test Live URL answers the question, “Can Google see this page?” while the index status answers, “Does Google want to show this page in search results?” These are two entirely separate concepts, and understanding the difference plays a critical role in accurately diagnosing indexing issues.
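The "Can Google see this page?" half of that distinction can be approximated locally. The sketch below is a rough stand-in, not a reproduction of what Test Live URL does: it only checks two common technical barriers (a robots.txt rule and a `noindex` robots meta tag) using Python's standard library. The function name `technical_blockers` and the sample URLs are hypothetical.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def technical_blockers(url, robots_txt, html, user_agent="Googlebot"):
    """Return technical reasons a crawler could not fetch or index the URL."""
    problems = []

    # robots.txt: is this user agent allowed to fetch the URL at all?
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    if not rules.can_fetch(user_agent, url):
        problems.append("blocked by robots.txt")

    # Robots meta tag: "noindex" (or "none") asks search engines not to index.
    meta = RobotsMetaParser()
    meta.feed(html)
    if "noindex" in meta.directives or "none" in meta.directives:
        problems.append("noindex directive in meta tag")

    return problems
```

An empty list here only means that no barrier of these two kinds was found. As with a successful live test, it says nothing about whether Google will decide the page is worth indexing.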

Interpreting Search Console Messages in Plain Language

The messages displayed within the URL Inspection report in Google Search Console can sometimes lead to misunderstandings if they are not interpreted correctly. For example, a message indicating that a URL is accessible to Google does not mean indexing is guaranteed—it simply confirms that no technical obstacle is preventing the crawl.

In many cases, Search Console provides indirect clues about why a page has not been indexed. Messages stating that a page is eligible for indexing but has not yet been indexed, or that indexing depends on certain conditions, typically indicate that Google has not yet made a final determination about the page’s value.

For this reason, it’s important to evaluate the full set of signals rather than focusing on a single line in the report. By considering accessibility status, live test results, and explanatory messages together, you can determine whether the issue is technical or whether your priority should shift toward improving content quality and page structure. More often than not, Search Console offers clearer insights into indexing issues than many site owners realize—provided you know how to interpret its feedback correctly.
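The idea of reading the signals together rather than one at a time can be written down as a small decision rule. This is a minimal sketch under simplifying assumptions: the three boolean fields are hypothetical summaries that you would fill in by hand from the URL Inspection report, not fields that Search Console exposes under these names.

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    accessible: bool        # Google can reach the URL (no crawl barrier reported)
    live_test_passed: bool  # the live test found no technical problem
    indexed: bool           # the URL currently appears in the index


def triage(signals: PageSignals) -> str:
    """Decide where to look first, based on all three signals together."""
    if signals.indexed:
        return "indexed"
    if not signals.accessible or not signals.live_test_passed:
        return "technical issue: fix crawl access first"
    # Reachable and technically sound, yet not indexed:
    # the remaining explanation is content quality or page structure.
    return "no technical barrier: review content quality and page structure"
```

For example, `triage(PageSignals(accessible=True, live_test_passed=True, indexed=False))` points toward content quality, which matches the reasoning in the paragraph above.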

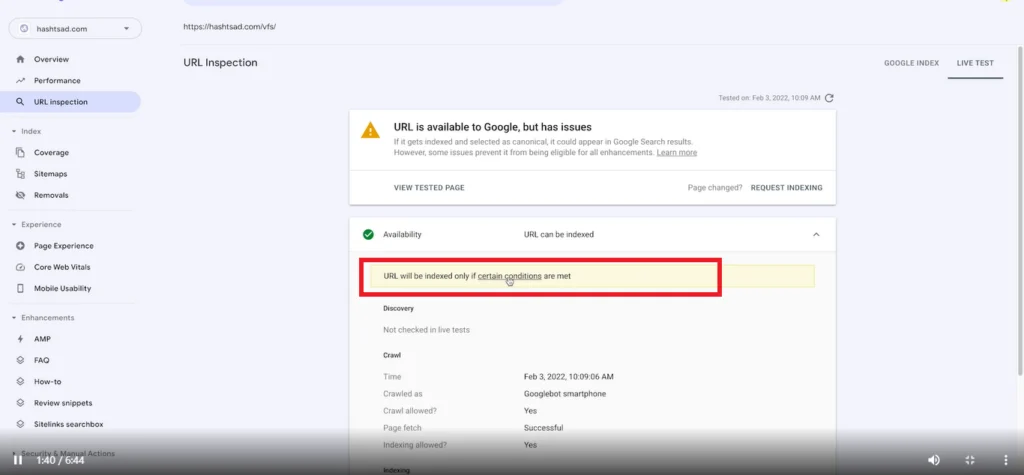

Google’s Important Message

One of the most significant messages you may encounter while reviewing non-indexed pages in Google Search Console is the statement: “URL will be indexed only if certain conditions are met.” The sentence looks simple, but it carries an important implication: passing a technical check does not by itself earn a place in the index. In essence, the message reminds us that indexing is not an automatic right but a deliberate decision made by the search engine.

What Does This Message Really Mean?

When Google states that a URL will be indexed only under certain conditions, it implies that simply being accessible or free of technical errors is not enough. Google may have already crawled your page, reviewed its content, and found no technical barriers, yet it can still decide not to include the page in search results.

This message indicates that after an initial evaluation, Google has placed the page in a review stage. Its inclusion in the index depends on whether it can pass Google’s quality filters. Put simply, Google is not yet convinced that the page adds sufficient value to search results.

Why Does Google Make Indexing Conditional?

The volume of content published online every day is enormous. Indexing every page would neither be practical nor beneficial for users. Google’s primary objective is to deliver the most relevant and high-quality results, and achieving this goal requires selectivity.

Conditional indexing allows Google to prioritize resources for pages that provide genuine value. Pages that are duplicate, low-quality, unfocused, or merely weaker versions of existing content often fail to move forward at this stage. As a result, Google may crawl such pages but choose not to index them in order to maintain the overall quality of search results.

Three Key Requirements for Page Indexing

Based on Search Console insights, official documentation, and industry experience, three primary conditions influence whether a page gets indexed:

1. No Security Issues or Manual Actions

A page should not be affected by security problems, policy violations, or manual penalties. If a website has been flagged for security risks, manipulative practices, or guideline breaches, even otherwise healthy pages may struggle to gain index eligibility.

2. Content Uniqueness

Google emphasizes the importance of originality. A page should not be a duplicate or overly similar to another page. When multiple pages offer little differentiation, Google typically selects one canonical version and ignores the rest.

3. Overall Page Quality

Perhaps the most critical factor is the page’s overall quality. Google expects content to be valuable enough to justify indexing. This goes beyond written text—it includes content structure, user experience, visual elements, search intent alignment, and how effectively the page meets user needs.

Google’s First Rule

The most fundamental requirement for page indexing is maintaining a secure website and fully complying with search engine guidelines. If Google determines that a site or page could pose a risk to users or violates its policies, the indexing process may be halted, often before content quality is even evaluated. For this reason, security issues and manual actions are the first things to rule out when a page is not indexed, even though, as noted earlier, most cases of non-indexing have nothing to do with penalties.

What Are Manual Actions?

A Manual Action occurs when Google’s human review team directly evaluates a website and concludes that it does not adhere to Google’s guidelines (now published as Google Search Essentials, formerly the Webmaster Guidelines). Unlike algorithmic decisions, which are automated, a Manual Action results from a deliberate human assessment and is typically communicated clearly through Google Search Console.

Manual Actions can stem from various issues, including unnatural link building, spam practices, large-scale copied content, or deceptive techniques intended to manipulate search rankings. When a site receives a Manual Action, Google may stop indexing new pages or even remove existing ones from search results. Until the issue is fully resolved and a reconsideration request is submitted, consistent indexing should not be expected.

The Impact of Black Hat SEO and Security Issues on Indexing

Black hat SEO tactics are one of the fastest ways to lose Google’s trust. Practices such as spammy link building, hidden content, keyword stuffing, or automatically generated text can signal that a site is unreliable. In such cases, even pages that appear technically sound may be excluded from the index.

Security problems also have a direct influence on indexing. Malware, malicious scripts, suspicious redirects, or injected spam links can prompt Google to restrict crawling and indexing in order to protect users. Search Console typically displays explicit security warnings in these situations, and ignoring them can disrupt the indexing performance of the entire website.

The Hidden Risk of Nulled Themes and Plugins

One overlooked factor behind indexing issues is the use of nulled or pirated themes and plugins. These resources often contain malicious code, hidden backdoors, or unknown scripts that operate without the site owner’s awareness.

Google is highly effective at detecting such threats. Once a website is identified as unsafe, trust declines—and with it, the likelihood of indexing. Consequences may include new pages failing to index, older pages gradually disappearing from search results, or security warnings appearing alongside your listings. Using legitimate, regularly updated software is therefore critical not only for security but also for maintaining stable, long-term indexability.

When a website is secure and free from penalties or violations, Google approaches its pages with greater confidence. This creates a strong foundation for the next stages of content evaluation.

Google’s Second Rule

The second major rule for indexing revolves around duplicate content and excessive similarity between pages. Contrary to popular belief, Google is not only concerned with word-for-word copies—pages that closely resemble each other in purpose, structure, or messaging can also fall into this category. As a result, many websites unintentionally reduce their indexing potential by publishing overlapping pages.

How Google Defines Duplicate Content

From Google’s perspective, duplicate content refers to material that is fully or substantially similar to content already available on the same site or elsewhere. This similarity may exist in the text, structure, titles, descriptions, or even the underlying intent of the page.

Google aims to present the most relevant version for each topic and typically indexes only one of several similar pages. Importantly, duplicate content does not automatically lead to penalties, but it does create uncertainty about which page deserves visibility. In some cases, the page you expect to be indexed may be overlooked, another version may be selected as canonical, or neither page may perform well in search results.
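To get a rough sense of whether two of your own pages are "substantially similar", you can compare their text directly. The sketch below uses Jaccard similarity over three-word shingles. This is a common textbook measure chosen for illustration, not Google's method (which is not public). It only captures surface overlap, so it will miss pages that say the same thing in different words.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=3):
    """Share of shingles the two texts have in common, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a or not b:
        return 0.0  # too little text to compare
    return len(a & b) / len(a | b)
```

A score close to 1.0 between two pages on the same site is a hint that they may be competing for the same spot. Any cut-off you apply is a judgment call rather than a known threshold.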

Similar Content—Even With Different Titles

A common misconception is that changing a page title or swapping in synonyms resolves duplication issues. In reality, Google evaluates content semantically rather than relying solely on surface-level wording. If two pages address the same topic with comparable structure and insights, they may still be recognized as duplicates despite having different titles.

For instance, pages targeting closely related transactional keywords may appear distinct but offer nearly identical value. In such cases, Google usually prioritizes one page while the other struggles to gain indexing traction. True uniqueness must exist in depth, perspective, and value—not just in phrasing.

The Challenge of Overlapping Product or Service Pages

Many websites create multiple pages for a single product or service—perhaps one focused on introduction and another on purchase—yet provide nearly identical descriptions on both. This overlap makes it difficult for search engines to determine which page should be indexed.

Google may index one page and ignore the other, or it may interpret both as low-value. The solution is clear differentiation of purpose. Each page should serve a distinct role and present content aligned with that objective so that search engines can easily understand their differences.

The Role of Canonical Tags

Canonical tags are an essential tool for managing duplicate or highly similar content. By specifying a canonical URL, you signal which version should be treated as the primary page. This reduces confusion and helps search engines focus on the correct URL.

However, canonicalization alone is not a cure-all. If pages remain excessively alike, even a declared canonical version may struggle to index. The most effective strategy is prevention: avoid creating redundant pages whenever possible, and ensure that each one delivers unique value with a clearly defined purpose.
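When auditing similar pages, it helps to confirm which canonical URL each page actually declares. The following sketch reads the `<link rel="canonical">` element from a page's HTML with Python's standard library and reports whether it points to a different URL. The function names and example URLs are hypothetical, and comparing URLs by stripping a trailing slash is a simplification.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Captures the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = {name: (value or "") for name, value in attrs}
        if "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def declared_canonical(page_url, html):
    """Return the absolute canonical URL the page declares, or None."""
    parser = CanonicalParser()
    parser.feed(html)
    if parser.canonical is None:
        return None
    return urljoin(page_url, parser.canonical)  # resolve relative hrefs


def canonical_points_elsewhere(page_url, html):
    """True if the page names a different URL as its canonical version."""
    canonical = declared_canonical(page_url, html)
    return canonical is not None and canonical.rstrip("/") != page_url.rstrip("/")
```

A page whose canonical points elsewhere is asking search engines to prefer the other URL, so its absence from the index may be expected rather than a problem.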

Ultimately, this rule underscores that Google prioritizes diversity and meaningful contribution over repetition. The more distinct your pages are in content, structure, and intent, the higher their chances of being indexed.

Google’s Third Rule (The Most Important)

Among all factors that influence indexing, the third rule is both the most critical and the most challenging. A page may be technically flawless, free of duplication, and hosted on a secure website—yet Google can still choose not to index it. The reason is straightforward: the search engine has not determined that the page provides sufficient value.

In recent years, Google’s focus has shifted from the quantity of pages to the quality they deliver. Rather than filling search results indiscriminately, Google aims to present the best possible answer. Consequently, content quality has become a decisive filter for indexing.

What Does High-Quality Content Really Mean?

High-quality content is not defined by length or keyword density. Instead, it is content that fulfills a specific user need clearly, thoroughly, and credibly. Google evaluates whether a page genuinely enhances a user’s understanding or decision-making—or merely repeats existing information.

Strong content typically has a well-defined purpose and a logical structure, and it leaves readers feeling that their question has been answered. Signals such as expertise, authority, and trustworthiness also contribute to perceived value. If content is superficial, overly generic, or lacks original insight, it may struggle to qualify for indexing, even if it appears optimized.

Long Content vs. Valuable Content

A widespread misconception is that longer articles automatically perform better. In reality, length alone does not earn a page a place in the index. Content padded with repetition, filler explanations, or shallow details rarely creates meaningful value.

A shorter piece that is precise, practical, and aligned with user needs can outperform a much longer one. Depth, clarity, and usefulness—not word count—are the metrics that matter most.

The Role of User Experience, Structure, and Depth

Content quality extends beyond text. User experience plays a significant role in how a page is evaluated. Poor structure and difficult readability make a page less useful to visitors, and a high bounce rate, whether or not Google measures it directly, is often a symptom of the same problems.

Clear hierarchy, effective headings, thoughtful formatting, strong readability, and purposeful visuals help demonstrate that a page is designed for people rather than algorithms. Depth is equally important: content that explores a topic from multiple angles and avoids incomplete answers stands a far better chance of being indexed.

Why Are Some Pages Crawled but Not Indexed?

A frequent question is why Google crawls certain pages but does not index them. The answer often lies in this third rule. Crawling means the page has been discovered and analyzed; non-indexing means it was ultimately deemed insufficient for search results.

Often, Google concludes that the page lacks unique value, provides only surface-level insight, or does not pursue a clear objective. This is a normal outcome and reflects Google’s selective approach rather than an error.

Additional Reasons Pages May Not Be Indexed

Beyond the three core rules, several less obvious factors can influence indexability. These often relate to the page’s true value, strategic purpose, and position within the broader site architecture.

Thin Content

Thin content refers to pages that offer minimal substance or lack meaningful added value. Such pages rarely satisfy user needs, and while Google may crawl them, it often determines that indexing would not improve search quality.

Thin content is not strictly about length; it is about purpose. Even long pages can qualify as thin if they rely on generic, repetitive information without delivering actionable insight.

Misalignment With Search Intent

One of the most significant causes of non-indexing is a mismatch between page content and user intent. Google prioritizes pages that directly align with what users are actually searching for.

For example, if users seek educational material but encounter heavily promotional content—or if they intend to make a purchase but find only broad descriptions—Google may view the page as ineffective. Even well-written content can be overlooked if it fails to match expectations.

Weak Internal Linking

Internal linking is a critical signal that helps search engines understand which pages matter most. Pages that receive little or no internal linkage—or are poorly integrated into site architecture—can appear unimportant.

When a page exists in isolation, Google may interpret it as secondary. Effective internal linking clarifies relationships between pages and strengthens indexing priority.
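Isolated pages are easy to find once you have a map of your internal links. The sketch below assumes you already have such a map, for example from a site crawl, as a dictionary from each page to the internal URLs it links to. That data structure and the function names are assumptions for illustration.

```python
from collections import Counter


def inbound_link_counts(link_graph):
    """Count how many distinct pages link to each page.

    link_graph maps each page URL to the list of internal URLs it links to.
    """
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):      # count each linking page once
            if target != source:         # ignore self-links
                counts[target] += 1
    return counts


def weakly_linked(link_graph, minimum=1):
    """Return pages that receive fewer than `minimum` internal links."""
    counts = inbound_link_counts(link_graph)
    return sorted(page for page, n in counts.items() if n < minimum)
```

Pages returned with the default `minimum=1` receive no internal links at all. If one of them is a page you want indexed, linking to it from related articles or category pages is a natural first step.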

Pages Without Independent Value

Certain pages inherently lack standalone value. Tag archives, filtered listings, internal search results, or similar aggregations often provide little original content. As a result, Google frequently chooses not to index them.

If left unoptimized, these pages can also waste crawl budget. It is important to decide whether they should be indexed and, if so, enhance them with meaningful, targeted content.
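Before deciding which of these pages deserve indexing, it helps to sort your URLs by type. The sketch below classifies URLs by simple patterns. The path prefix and parameter names are hypothetical examples loosely modeled on common WordPress and shop setups, so adjust them to your own URL structure.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical patterns; replace with the ones your site actually uses.
ARCHIVE_PREFIXES = ("/tag/",)
SEARCH_PARAMS = {"s", "q", "search"}
FILTER_PARAMS = {"filter", "sort", "orderby", "color", "size"}


def page_type(url):
    """Roughly classify a URL as search, filtered listing, archive, or content."""
    parsed = urlparse(url)
    params = set(parse_qs(parsed.query, keep_blank_values=True))
    if params & SEARCH_PARAMS:
        return "internal search"
    if params & FILTER_PARAMS:
        return "filtered listing"
    if parsed.path.startswith(ARCHIVE_PREFIXES):
        return "archive"
    return "content"
```

Running this over a sitemap or crawl export gives a quick count of how many URLs fall into each low-value category, which makes the index-or-not decision easier to take per group rather than per page.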

Poor Translation or Superficial Rewriting

Content that is directly translated from external sources or lightly rewritten tends to have limited indexing potential. Google can readily identify material that fails to introduce new insights.

Superficial rewriting—where only wording changes but structure and meaning remain identical—offers little distinction from copied content. To improve indexability, content should reflect genuine expertise, audience awareness, and original perspective.

Together, these factors highlight that non-indexing rarely stems from a single issue. Often, a combination of subtle weaknesses leads Google to classify a page as low value. Attention to these details can significantly improve indexing rates.

A Quick Checklist for Diagnosing Indexing Issues

If you want to quickly determine why a page is not indexed, start with a few essential checks:

- Look for similar pages: Multiple overlapping pages often compete, and Google typically selects only one.

- Review internal links: Pages that are well-connected within the site structure signal importance.

- Evaluate real user value: Does the content solve a problem, provide reliable information, and fully address user needs?

Ask yourself a simple question: Does this page truly deserve a place in search results?

If the answer is yes, focus on technical improvements, content quality, and internal linking. If not, consider revising the page to strengthen its value proposition.

Conclusion

Pages that fail to index are not necessarily a sign of failure. In most cases, the cause lies in overlooking one or more of Google’s core principles rather than any fault within the search engine itself. Through careful analysis, higher content standards, and adherence to best practices, your pages can significantly improve their chances of appearing in search results.

Practical experience shows that even large, authoritative websites occasionally struggle with indexing due to minor issues. However, targeted optimizations can lead to faster and more sustainable indexing outcomes.

If you have encountered indexing challenges or discovered effective solutions, consider sharing your insights in the comments. Exchanging experiences helps the entire digital community navigate these challenges more successfully—and ultimately contributes to a richer, more valuable web ecosystem.
